The future of data integration

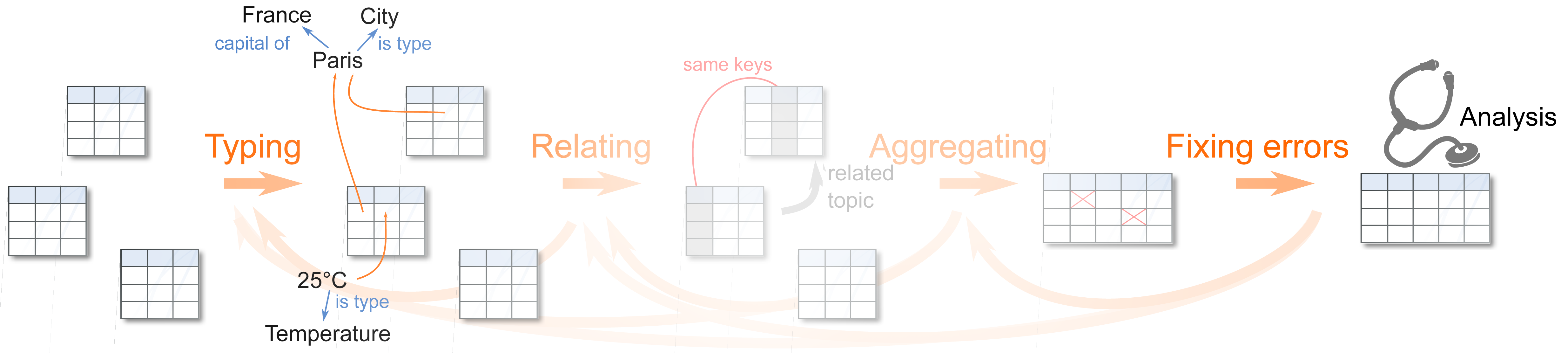

Data integration and data cleaning, i.e. going from raw data to statistical analysis, are a major stumbling block in data science.

DirtyData is an ambitious research project that brings together major players in French public and private research to tackle this problem.

Two research axes are funded. One revolves around dirty data integration and is funded by the ANR (Agence Nationale de la Recherche).

The other strives to develop new techniques to analyze incomplete data. It is funded by the DataIA institute.

Presentation: Useful results from DirtyData for machine learning in Python on non-curated data

A presentation of practical results from the DirtyData project, aimed at data analysts who run machine learning in Python on non-curated data.