Semapolis Project — Semantic Visual Analysis and 3D Reconstruction of Urban Environments

The goal of the SEMAPOLIS project (1/10/2013-30/09/2017) was to develop advanced large-scale image analysis and learning techniques to semantize city images and produce semantized 3D reconstructions of urban environments, including proper rendering. The Semapolis project was partly funded by the French National Research Agency (ANR) in the CONTINT 2013 program under reference ANR-13-CORD-0003.

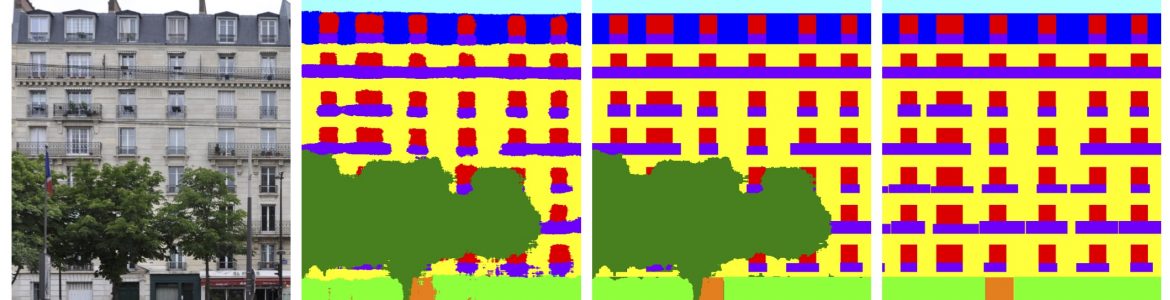

Geometric 3D models of existing cities have a wide range of applications, such as navigation in virtual environments and realistic scenery for video games and movies. A number of players (Google, Microsoft, Apple) have started to produce such data. However, the models feature only plain surfaces, textured from available pictures. This limits their use in urban studies and in the construction industry, and in practice rules out applications such as diagnosis and simulation. Besides, geometry and texturing are often wrong where parts of the scene are invisible or discontinuous, e.g., behind occluding foreground objects such as trees, cars or lampposts, which are pervasive in urban scenes.

Initial Goals (2013)

We wish to go beyond this by producing semantized 3D models, i.e., models which are not bare surfaces but which identify architectural elements such as windows, walls, roofs, doors, etc. The semantic priors we will use to analyze images will also let us reconstruct plausible geometry and rendering for invisible parts. Semantic information is useful in a larger number of scenarios, including diagnosis and simulation for building renovation projects, accurate shadow impact assessment taking into account actual window locations, and more general urban planning and studies such as solar cell deployment. Another line of applications concerns improved virtual cities for navigation, with object-specific rendering, e.g., specular surfaces for windows. Models can also be made more compact, by encoding object repetition (e.g., windows) rather than individual instances and by replacing actual textures with more generic ones chosen according to semantics; this allows cheap and fast transmission over low-bandwidth mobile phone networks, and efficient storage in GPS navigation devices.

The primary goal of the project is to make significant contributions and advance the state-of-the-art in the following areas:

- Learning for visual recognition: Novel large-scale machine learning algorithms will be developed to recognize various types of architectural elements and styles in images. These methods will be able to fully exploit very large amounts of image data while at the same time requiring a minimum amount of user annotation (weakly supervised learning).

- Shape grammar learning: Techniques will be developed to learn stochastic shape grammars from examples, along with the corresponding architectural style. Learnt grammars will be able to rapidly adapt to a wide variety of specific building types without the cost of manual expert design. Learnt grammar parameters will also lead to better parsing: faster, more accurate and more robust.

- Grammar-based inference: Innovative energy minimization approaches will be developed, leveraging bottom-up cues, to efficiently cope with the exponential number of grammar interpretations, in particular in the context of grammars featuring rich architectural elements. A principled aggregation of the statistical visual properties will be designed, to accurately score parsing trials.

- Semantized 3D reconstruction: Robust original techniques will be developed to synchronize multiple-view 3D reconstruction with the semantic analysis, preventing inconsistencies such as misaligned roofs and windows at facade corners.

- Semantic-aware rendering: Image-based rendering techniques will be developed that benefit from semantic classification to greatly improve visual quality, through better depth synthesis, adaptive warping and blending, hole filling and region completion.

To validate our research, we will run experiments based on various kinds of data concerning large cities, in particular Paris (large-scale panoramas, smaller scale but denser and georeferenced terrestrial and aerial images, cadastral maps, construction date database), reconstructing and rendering an entire neighborhood.

Revisited Goals (2017)

Semapolis was designed in 2012-2013 on the basis of methods that were either well established at that time but still offered interesting prospects for improvement (e.g., graphical models) or relatively new and promising (e.g., image-based rendering, parsing with shape grammars using reinforcement learning techniques).

However, the success and rapid development of deep learning that followed the initial design of the project led us to significantly redraw the map of relevant methodological tools, without altering the purpose of the project. Semapolis researchers thus quickly began to explore general deep learning techniques and their uses for semantic urban analysis and reconstruction. As for visual navigation in virtual 3D environments, the project remained focused on image-based rendering (IBR), in particular with the use of rich inferred semantic information.

Major results were obtained in these domains, with some 40 international peer-reviewed publications, most of them in top-tier venues and half of them with publicly available code and data.